The Fourth Industrial Revolution (4IR) is a term used to describe the current and emerging technological advancements that are expected to change the way we live and work. It is characterized by a fusion of technologies that blur the lines between the physical, digital, and biological spheres. It builds on the Third Industrial Revolution (the Digital Revolution) and brings greater interconnectivity and interdependence of systems, resulting in a more dynamic and complex global environment.

Adapting to the Fourth Industrial Revolution requires a proactive approach from governments, businesses, and individuals. This includes investing in education and training programs, developing new policies and regulations, and fostering innovation and collaboration.

In this article, let's learn more about:

- Where did the term “Fourth Industrial Revolution” come from?

- Fourth Industrial Revolution (4IR) Technologies

- Artificial Intelligence (AI) and Machine Learning

- Internet of Things (IoT)

- Robotics and Automation

- Quantum Computing

- Biotechnology and Genomics

- 5G and other advanced communication technologies

- Cloud Computing and Edge Computing

- Blockchain and Distributed Ledger Technology

- Key Takeaways

Where did the term “Fourth Industrial Revolution” come from?

The term "Fourth Industrial Revolution" (4IR) was popularized by Klaus Schwab, the founder and executive chairman of the World Economic Forum (WEF), most notably in his book "The Fourth Industrial Revolution", published in 2016. Schwab used the term to describe the current and emerging technological advancements that are expected to change the way we live and work, just as the first three industrial revolutions did in the past.

Schwab argued that the Fourth Industrial Revolution is different from the previous ones in that it is characterized by a fusion of technologies that blur the lines between the physical, digital, and biological spheres. This fusion of technologies is also creating a more complex and dynamic global environment, with greater interconnectivity and interdependence of systems.

The concept of industrial revolutions is not new, and the first three industrial revolutions were identified as follows:

- The First Industrial Revolution (1760-1840): characterized by the development of steam power and the mechanization of manufacturing.

- The Second Industrial Revolution (1870-1914): characterized by the widespread use of electricity, the development of the assembly line, and the mass production of goods.

- The Third Industrial Revolution (1969-1989): characterized by the development of digital technology and the computerization of manufacturing and other industries.

Schwab's use of the term "Fourth Industrial Revolution" has been widely adopted by experts, researchers, and policymakers and has been the subject of much discussion and debate in academic and policy communities.

To respond to the Fourth Industrial Revolution, it is important for individuals and organizations to stay informed about the latest technological developments and to invest in upskilling and reskilling efforts. This can include learning new skills, such as coding or data analysis, or developing a deeper understanding of how new technologies can be used to improve business processes or create new products and services.

It is also important to consider the potential social and ethical implications of these technologies and to work towards creating a more inclusive and equitable society.

For governments, it is important to create policies and regulations that support innovation while also protecting citizens and the environment. This may include investing in research and development, providing education and training opportunities, and implementing regulations to ensure that technology is used ethically and responsibly.

Overall, the Fourth Industrial Revolution presents both opportunities and challenges, and it is important for individuals, organizations, and governments to be proactive in their approach to this rapidly changing landscape.

Let us now look at the key technologies in detail below.

Fourth Industrial Revolution (4IR) Technologies

The Fourth Industrial Revolution (4IR) is driven by a number of technological advancements that are expected to have a significant impact on the way we live and work. The key technologies driving change in the 4IR include:

Artificial Intelligence (AI) and Machine Learning

Artificial Intelligence (AI) refers to the simulation of human intelligence in machines that are programmed to think and learn like humans. It involves the development of algorithms and computer programs that can perform tasks that would normally require human intelligence, such as visual perception, speech recognition, decision-making, and language translation.

Machine learning, on the other hand, is a subset of AI that involves the development of algorithms and statistical models that enable machines to improve their performance with experience. Machine learning algorithms are designed to learn from data, identify patterns, and make predictions without being explicitly programmed.
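
To make "learning from data" a little more concrete, here is a minimal sketch in Python. It fits a straight line to a handful of data points using ordinary least squares and then predicts a value it has not seen. The data set (hours of machine use versus energy consumed) and the function names `fit_line` and `predict` are made up for illustration; real machine learning systems use far richer models and libraries.

```python
def fit_line(xs, ys):
    """Fit y = slope * x + intercept by ordinary least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Covariance of x and y, and variance of x (both unnormalized).
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    slope = cov_xy / var_x
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(model, x):
    """Apply the learned line to a new input."""
    slope, intercept = model
    return slope * x + intercept


# Hypothetical training data: hours of machine use vs. energy consumed (kWh).
hours = [1.0, 2.0, 3.0, 4.0, 5.0]
energy = [2.1, 4.0, 6.2, 7.9, 10.1]

model = fit_line(hours, energy)
print("learned slope and intercept:", model)
print("predicted energy for 6 hours:", predict(model, 6.0))
```

Nobody wrote a rule saying "energy is roughly twice the hours"; the program estimated that relationship from the examples, which is the core idea behind machine learning.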

In summary, AI is a broad field that encompasses various subfields such as natural language processing, computer vision, and expert systems, while machine learning is a specific subfield of AI that deals with the development of algorithms that enable machines to learn from data.

Internet of Things (IoT)

The Internet of Things (IoT) refers to the network of physical objects, such as devices, vehicles, buildings, and other items that are embedded with sensors, software, and connectivity, which enables them to collect and exchange data. The IoT allows these objects to connect and communicate with each other and with the internet, enabling them to be controlled and monitored remotely.

Some common examples of IoT devices include smart home devices like thermostats, security cameras, and door locks; wearables like fitness trackers and smartwatches; industrial equipment and machines; and connected cars.
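
As a rough illustration of how such a device might "collect and exchange data", the sketch below packages a single sensor reading as a JSON message and decodes it again on the receiving side. The `SensorReading` fields, the device name `thermostat-01`, and the message layout are assumptions made for this example and do not follow any particular IoT standard; a real device would also send the message over a network protocol, which is left out here.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class SensorReading:
    """One measurement from a (hypothetical) connected device."""
    device_id: str
    metric: str
    value: float
    unit: str
    timestamp: int  # seconds since the Unix epoch


def encode(reading: SensorReading) -> str:
    """Serialize a reading to a JSON string, as a device might before sending."""
    return json.dumps(asdict(reading), sort_keys=True)


def decode(payload: str) -> SensorReading:
    """Rebuild the reading on the receiving side (for example, a cloud service)."""
    return SensorReading(**json.loads(payload))


reading = SensorReading("thermostat-01", "temperature", 21.5, "C", 1700000000)
payload = encode(reading)
received = decode(payload)

print(payload)
print(received == reading)
```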

The IoT has the potential to revolutionize various industries, such as healthcare, transportation, manufacturing, and agriculture, by enabling more efficient, cost-effective, and safer operations. However, IoT also raises significant concerns about privacy and security as the data collected by these devices may be sensitive and, if not properly secured, could be vulnerable to cyber-attacks.

Robotics and Automation

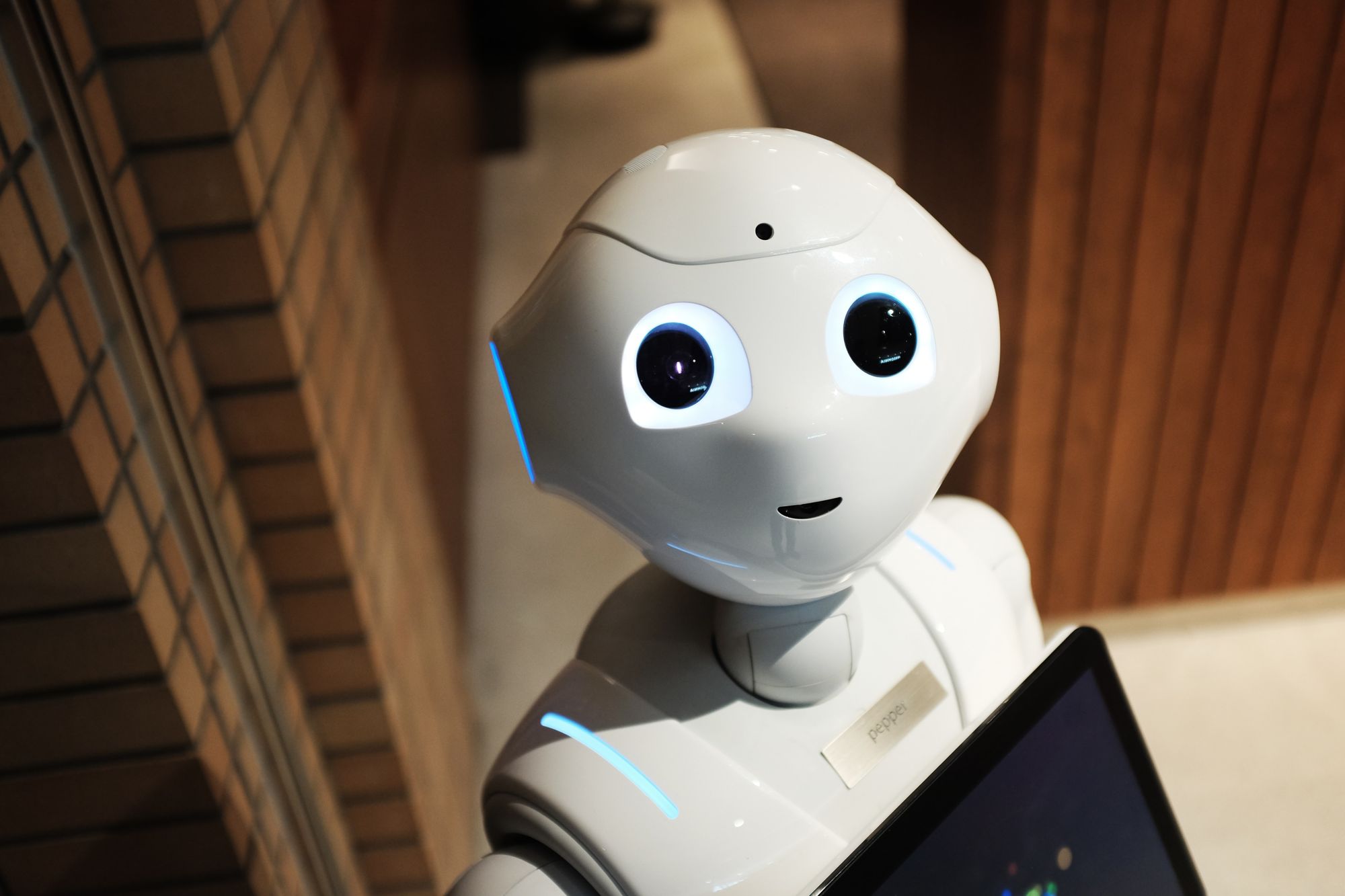

Robotics and automation technologies are being used to automate repetitive tasks, improve accuracy and quality, and increase efficiency and productivity. Robotics is the branch of engineering and science that deals with the design, construction, operation, and use of robots. Robotics involves the integration of mechanical, electrical, and computer engineering, as well as other fields, such as artificial intelligence and machine learning, to create machines that can sense, reason, and act in the physical world.

Automation, on the other hand, refers to the technology by which a process or procedure is performed without human intervention. Automation can be achieved through the use of control systems, such as programmable logic controllers (PLCs) or industrial robots.
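
The kind of logic a simple control system runs can be sketched in a few lines of Python. The example below is an on/off controller with a dead band that keeps the level of a tank between two thresholds without human intervention. The tank, the thresholds, and the fill and drain rates are all hypothetical, and a real PLC would be programmed in its own language rather than Python.

```python
def pump_command(level, pump_on, low=20.0, high=80.0):
    """Decide whether the pump should run during the next cycle."""
    if level <= low:
        return True       # tank is too empty: start pumping
    if level >= high:
        return False      # tank is full enough: stop pumping
    return pump_on        # inside the band: keep the previous state


def simulate(cycles, level=50.0, fill_rate=8.0, drain_rate=5.0):
    """Run the control loop for a number of cycles and record what happens."""
    pump_on = False
    history = []
    for _ in range(cycles):
        pump_on = pump_command(level, pump_on)
        if pump_on:
            level += fill_rate
        level -= drain_rate  # the tank is always being drained by its consumers
        history.append((level, pump_on))
    return history


for level, pump_on in simulate(10):
    print(f"level={level:5.1f}  pump={'on' if pump_on else 'off'}")
```

Keeping the previous state inside the band avoids switching the pump on and off on every cycle, which is a common design choice for this kind of controller.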

Robotics and automation are closely related, as robots are often used to automate tasks that are too dangerous, difficult, or repetitive for humans to perform. They have applications in many fields, such as manufacturing, transportation, medicine, and space exploration. Both have the potential to increase efficiency, productivity, and safety, but they can also displace human workers in certain industries, leading to job losses and other socio-economic impacts.

Quantum Computing

Quantum computing is a field of computer science and technology that uses quantum-mechanical phenomena, such as superposition and entanglement, to process and store information. Unlike classical computers, which use binary digits (bits) that are either 0 or 1, quantum computers use quantum bits, or qubits, which can exist in a superposition of both states at once. This allows quantum computers to perform certain computations much faster than classical computers.
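
The idea of a qubit being in more than one state at once can be illustrated with a tiny classical simulation. The sketch below represents a single qubit as a pair of complex amplitudes and applies a Hadamard gate, a standard operation that turns the state 0 into an equal superposition of 0 and 1. This is only a simulation on an ordinary computer, written for illustration; the helper names `hadamard` and `probabilities` are ours, and it does not show any quantum speed-up.

```python
import math


def hadamard(state):
    """Apply the Hadamard gate to a single-qubit state (amp_0, amp_1)."""
    a, b = state
    s = 1 / math.sqrt(2)
    return (s * (a + b), s * (a - b))


def probabilities(state):
    """Probability of measuring 0 or 1: the squared magnitude of each amplitude."""
    a, b = state
    return (abs(a) ** 2, abs(b) ** 2)


zero = (1 + 0j, 0 + 0j)      # the qubit starts in state 0
superposed = hadamard(zero)  # equal superposition of 0 and 1

# Measuring now gives 0 or 1 with (approximately) equal probability.
print(probabilities(superposed))

# Applying the gate a second time brings the qubit back to state 0
# (up to floating-point rounding).
print(probabilities(hadamard(superposed)))
```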

Quantum computing has the potential to solve problems that are currently infeasible for classical computers, such as breaking some widely used public-key encryption schemes and simulating complex chemical reactions. It has potential applications in areas such as drug discovery, finance, and machine learning.

There are different types of quantum computing architectures, such as gate-based, topological, and adiabatic quantum computing, each with its own advantages and disadvantages.

Quantum computing is still in its early stages of development, and commercial quantum computers are not yet widely available. The development and implementation of quantum computing also face various challenges, such as dealing with errors and decoherence and developing algorithms and software that can take advantage of the unique properties of quantum computers.

Biotechnology and Genomics

Biotechnology and genomics are the application of technology to the study of living organisms and their functions. Advancements in biotechnology and genomics are enabling researchers to better understand the human body and disease and to develop new therapies and treatments.

Biotechnology is the use of living organisms, cells, or biological systems to develop products and technologies that improve human health, agriculture, and the environment. It encompasses a wide range of fields, such as genetic engineering, biochemistry, microbiology, and medical research.

Genomics is the study of an organism's complete set of genetic material, including its DNA sequence, structure, and function. It is an interdisciplinary field that combines biology, computer science, and statistics to analyze and understand the genetic makeup of organisms.

Genomics is a rapidly growing area of biotechnology that has had a major impact on many fields, such as medicine, agriculture, and environmental science. Applications of genomics include the development of new drugs and therapies, the improvement of crop yields and disease resistance, and the understanding of the genetic basis of human diseases.

The rapid advancement of genomics technologies, such as high-throughput DNA sequencing and bioinformatics, has made it possible to generate and analyze large amounts of genetic data. However, the field also raises ethical concerns about issues such as genetic privacy, discrimination, and the use of genetic information in insurance and employment.
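
To give a flavor of what analyzing genetic data with software can look like, here is a very small Python sketch. It computes two simple statistics for a DNA sequence: its GC content (the fraction of bases that are G or C) and the number of times each short subsequence (k-mer) occurs. The sequence is a made-up fragment, and real bioinformatics pipelines work on far larger data with specialized tools.

```python
from collections import Counter


def gc_content(seq):
    """Fraction of bases in the sequence that are G or C."""
    seq = seq.upper()
    if not seq:
        return 0.0
    return (seq.count("G") + seq.count("C")) / len(seq)


def kmer_counts(seq, k):
    """Count every overlapping subsequence of length k."""
    seq = seq.upper()
    return Counter(seq[i:i + k] for i in range(len(seq) - k + 1))


fragment = "ATGCGCGATTA"  # made-up DNA fragment, for illustration only

print(f"GC content: {gc_content(fragment):.2f}")
print(kmer_counts(fragment, 2).most_common(3))
```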

5G and other advanced communication technologies

5G is the fifth generation of cellular mobile communications, designed to provide faster download and upload speeds, lower latency, more reliable connections, and increased capacity compared to previous generations of cellular technology (such as 4G). 5G networks use a combination of technologies such as millimeter wave (mmWave) spectrum, massive MIMO (multiple-input, multiple-output), and beamforming to achieve these improvements.

5G has the potential to enable new use cases and applications such as autonomous vehicles, smart cities, and the Internet of Things (IoT), as well as to provide more reliable and faster mobile broadband service.

Other advanced communication technologies include:

- Li-Fi (Light Fidelity): a wireless communication technology that uses visible light to transmit data, with the potential for higher speeds and more secure communication than Wi-Fi.

- Software-Defined Networking (SDN): an approach to networking that separates the control plane (the part of the network that makes decisions about how data is sent) from the data plane (the part of the network that actually forwards the data). This allows for more flexibility and programmability in network management.

- Visible Light Communication (VLC): a method of transmitting data using the visible light spectrum. It can be used in environments where radio frequency (RF) communication is not possible or desirable, such as in hospitals or airplanes.

- Cognitive Radio (CR): a type of wireless communication in which the radio can adapt its transmission and reception parameters to optimize communication and avoid interference with other devices.

All these technologies are at different stages of development and may have different levels of commercial availability, but they all have the potential to improve communication speed, reliability, and security and to enable new and innovative applications.
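
The split between control plane and data plane described for SDN above can be sketched in Python. In this toy model, a `Switch` only looks up where to send traffic, while a central `Controller` decides the routes and installs them on the switches. The class names, port names, and addresses are hypothetical, and the sketch does not use any real SDN protocol or controller software.

```python
class Switch:
    """Data plane: forwards traffic using a table it does not compute itself."""

    def __init__(self, name):
        self.name = name
        self.flow_table = {}  # destination address -> output port

    def forward(self, destination):
        # Unknown destinations are dropped in this simplified model.
        return self.flow_table.get(destination, "drop")


class Controller:
    """Control plane: decides routes centrally and installs them on switches."""

    def __init__(self):
        self.switches = []

    def register(self, switch):
        self.switches.append(switch)

    def install_route(self, destination, port_by_switch):
        """Push one forwarding rule to every registered switch that is named."""
        for switch in self.switches:
            if switch.name in port_by_switch:
                switch.flow_table[destination] = port_by_switch[switch.name]


controller = Controller()
s1, s2 = Switch("s1"), Switch("s2")
controller.register(s1)
controller.register(s2)

# The controller programs both switches for one destination.
controller.install_route("10.0.0.5", {"s1": "port-2", "s2": "port-1"})

print(s1.forward("10.0.0.5"))
print(s2.forward("10.0.0.9"))
```

Because the forwarding rules live in one place, changing how the network behaves means changing the controller's logic rather than reconfiguring each switch by hand.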

Cloud Computing and Edge Computing

Cloud computing refers to the delivery of computing services over the internet, while edge computing refers to the distribution of computing power closer to the data source.

Cloud services include servers, storage, databases, networking, software, analytics, and intelligence, delivered over the internet (“the cloud”) to offer faster innovation, flexible resources, and economies of scale. Cloud computing allows users to access their data and applications from anywhere and to scale their resources up or down as needed. There are three main service models of cloud computing:

- Infrastructure as a Service (IaaS): It provides users with virtualized computing resources such as servers, storage, and networking.

- Platform as a Service (PaaS): It provides users with a platform for developing, running, and managing applications without the need to manage the underlying infrastructure.

- Software as a Service (SaaS): It delivers software applications over the internet, on a subscription basis.

Edge computing, on the other hand, is a distributed computing paradigm that brings computation and data storage closer to the devices that generate and consume the data. It enables data processing at the edge of the network, near the source of the data, rather than sending all the data to a central location for processing. This allows for faster and more efficient processing and reduces the amount of data that needs to be transmitted over the network.
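
The bandwidth saving can be illustrated with a small Python sketch. Instead of sending every raw sensor reading to a central server, a hypothetical edge device summarizes a batch locally and forwards only the summary plus any readings that look abnormal. The readings, the threshold, and the summary fields are invented for this example.

```python
import json
from statistics import mean


def summarize(readings, threshold):
    """Reduce a batch of raw readings to a compact summary at the edge."""
    return {
        "count": len(readings),
        "mean": round(mean(readings), 2),
        "max": max(readings),
        # Only unusual readings are forwarded in full.
        "alerts": [r for r in readings if r > threshold],
    }


# Hypothetical batch: 600 temperature readings with one abnormal spike.
raw = [20.0 + (i % 5) * 0.1 for i in range(600)]
raw[300] = 35.7

summary = summarize(raw, threshold=30.0)

raw_bytes = len(json.dumps(raw))          # size if everything were sent
summary_bytes = len(json.dumps(summary))  # size of what is actually sent

print(summary)
print(f"raw: {raw_bytes} bytes, summary: {summary_bytes} bytes")
```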

Edge computing can be used in applications such as IoT, autonomous vehicles, and industrial automation, where low latency, efficient use of bandwidth, and high reliability are required.

Cloud computing and edge computing each have their own advantages and disadvantages. Cloud computing provides scalability, cost-efficiency, and access to a wide range of resources, while edge computing provides low latency, reduced bandwidth usage, and real-time data processing.

Blockchain and Distributed Ledger Technology

Blockchain is a distributed ledger technology (DLT) that allows multiple parties to maintain a shared, tamper-resistant record of digital transactions. The ledger is distributed across a network of computers, and every participant in the network maintains a copy, which makes it difficult to alter or hack. Each block in the chain contains a set of transactions and a cryptographic hash of the previous block, so once a block is added to the chain, the transactions it contains cannot be changed without invalidating every block that follows.
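
Why a chain of blocks is hard to alter can be shown with a short Python sketch. Each block stores the hash of the block before it, so changing an old transaction breaks the link to everything that follows. This is a simplified illustration under our own assumptions: the block layout and function names are invented, and the sketch leaves out the network, consensus, and digital signatures that real blockchains such as Bitcoin rely on.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first block


def block_hash(index, transactions, previous_hash):
    """Hash the contents of a block together with the previous block's hash."""
    payload = json.dumps(
        {"index": index, "transactions": transactions, "previous_hash": previous_hash},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def add_block(chain, transactions):
    """Append a new block that points at the current last block."""
    previous_hash = chain[-1]["hash"] if chain else GENESIS_HASH
    index = len(chain)
    chain.append({
        "index": index,
        "transactions": transactions,
        "previous_hash": previous_hash,
        "hash": block_hash(index, transactions, previous_hash),
    })


def is_valid(chain):
    """Check every link and every stored hash in the chain."""
    for i, block in enumerate(chain):
        expected_previous = chain[i - 1]["hash"] if i > 0 else GENESIS_HASH
        if block["previous_hash"] != expected_previous:
            return False
        recomputed = block_hash(block["index"], block["transactions"], block["previous_hash"])
        if block["hash"] != recomputed:
            return False
    return True


chain = []
add_block(chain, ["alice pays bob 5"])
add_block(chain, ["bob pays carol 2"])
print(is_valid(chain))  # True

# Tampering with an old transaction is detected.
chain[0]["transactions"] = ["alice pays bob 500"]
print(is_valid(chain))  # False
```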

The best-known application of blockchain is Bitcoin, the first decentralized digital currency, which uses blockchain technology to record and verify transactions. However, blockchain technology has many other potential use cases, such as supply chain management, voting systems, digital identity, and smart contracts.

Distributed Ledger Technology (DLT) is a broader term that includes blockchain and other technologies that allow multiple parties to maintain a shared, tamper-resistant record of digital transactions across a network of computers. DLT can be used for various applications in different industries such as finance, supply chain, healthcare, and government.

Blockchain technology is still relatively new, and the full range of its potential is yet to be fully realized. It also faces challenges such as scalability, regulatory compliance, and interoperability with existing systems.

In summary, blockchain is a specific type of distributed ledger technology that uses a chain of blocks to record and verify digital transactions in a decentralized and tamper-resistant way, while distributed ledger technology is a broader term that refers to any technology that allows multiple parties to maintain a shared, tamper-resistant record of digital transactions.

Key Takeaways

Some of the key technological trends driving the Fourth Industrial Revolution include:

- Artificial Intelligence (AI) and machine learning

- Internet of Things (IoT)

- Robotics and automation

- Quantum computing

- Biotechnology and genomics

- 5G and other advanced communication technologies

- Cloud computing and edge computing

- Blockchain and distributed ledger technology

These technologies are expected to have a profound impact on the economy, the workforce, and the way we live and interact. They bring many opportunities for innovation, productivity, and improved quality of life but also pose significant challenges, including job displacement, cybersecurity risks, and potential social and ethical issues.
